In this guide we’ll teach you what makes good usability testing questions and how and when to ask them.

How to write usability testing questions (and tasks)

Asking the right questions in your study and being able to formulate comprehensive tasks is truly an art of language. Questions and tasks aren’t always easy to write, but if you get them right, the insights you gain from the test will be priceless.

A good usability testing question is:

- short and clear

- direct

- free of complicated grammar and slang

- unbiased

Below, we’ve gathered some best practices to help you write good usability testing questions and tasks.


Create tasks that address your goals

When writing your tasks, focus on covering some of the most important functions of your website. These are the ones you want to test.

What do you want your users to do?

What do they usually look for on your website?

Assume we have an e-commerce website with a shopping cart, and one of our goals is to see if users can add items to the cart. Here’s an example of a good task aimed at testing this goal: “You are looking to purchase a new digital camera. Find the one you like and make the purchase.”

This task is actionable and realistic, and it covers our objective well.

Analyzing the study results and session recordings will help you answer questions like these:

- How many people successfully bought a new camera?

- How long did it take them to make a purchase?

- What was the buyer’s journey?

- Did they face any difficulties when trying to make a purchase?

Replicate real life scenarios

The best tasks are the ones that replicate a respondent’s real-life behavior. When people can identify with the task, the study becomes more realistic and the results more accurate.

For example, if you want to find out whether users can purchase a digital camera on your e-commerce website, it’s good practice to recruit people who are likely to buy electronics for themselves.

Always frame your tasks as scenarios to put respondents in the right mindset. A scenario introduces the problem to the respondent and encourages them to seek a solution.

A bare instruction asks respondents to find something mechanically, while a scenario lets them grasp the problem, think about it beyond the surface, and consider how they would approach it in real life.

Giving respondents some freedom to define their goals is another way to help them truly identify with the tasks.

So instead of asking respondents from your target market to:

“Buy the least expensive television in the inventory.”

you could recruit respondents who are actually in the process of looking for a new TV and ask them to:

“Find a TV that you like and buy it.”

Make it actionable

In usability testing, it’s always better to ask respondents to do something rather than ask them how they would do it.

For example, “How would you purchase a digital camera on this website?” is a bad task.

A much better one would be: “Find a digital camera you like, then complete the purchase.”

Tasks like this trigger users’ actions instead of just a verbal response. What people say can differ considerably from what they actually do, so self-reported claims tend to be inaccurate. Actionable tasks give you real insights, not vague assumptions.

Don’t give away the answer

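Our brains are good at spotting patterns and shortcuts that lead to simple solutions for complex problems. As a result, if your task contains any hints that could help respondents complete it faster, they will use them. For example, a task that tells respondents to “add the camera to the cart” only requires them to look for a button with those words, so you learn little about whether they would find their own way there.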

The same applies to questions. If your question makes it obvious what kind of answer you are looking for, or which answer is the “correct” one, respondents will be more likely to pick it. Giving an example in a free-text question may lead to answers that mirror your example instead of expressing the respondents’ true attitudes or observations.

Types of usability testing questions

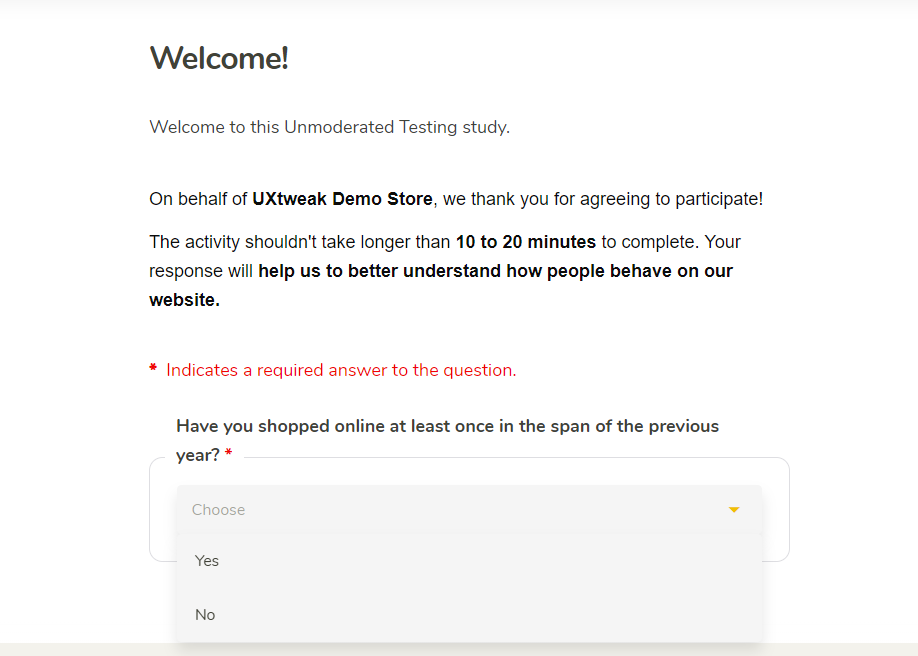

Screeners

A screener is the first question your respondents see when they open the study. It helps you filter out testers who don’t represent your target audience, so you can focus on the answers that are relevant to your study. Adding a screener to your usability test is a great way to ensure the accuracy of your results. It also saves both your time and the respondents’ time.

For example, if you are testing a website and your target audience is dog owners, you would want your testers to own a dog.

Therefore, a screening question for that study would be:

“Do you have at least 1 dog?”

- Yes

- No

However, to make it less obvious which answer will let respondents into the study, we recommend a more general screening question:

“Do you own a pet? If so, which kind of animal?”

- Don’t own a pet

- Dog/dogs and no other animals

- Cat/cats and no other animals

- Dog/dogs and cat/cats

- …

Example of a screening question in UXtweak Website Testing Demo Study:

Check out our demos to see how the different types of usability testing questions look inside a study.

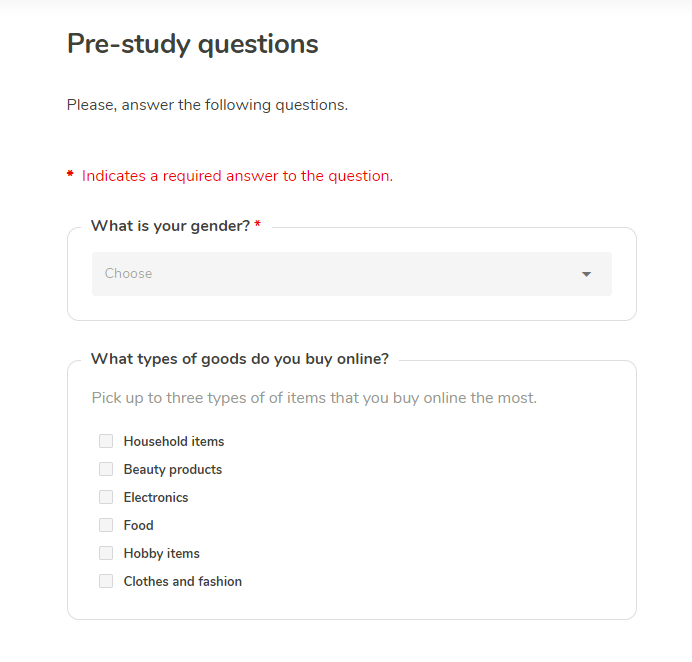

Pre-study questions

The primary objective of pre-study questions is to learn about the respondents’ backgrounds and previous experiences. This is your chance to collect information that will help you empathize with the users and get to know them better. Depending on the usability testing method you choose, the pre-study questionnaire can take the form of a survey or an interview.

Avoid questions that could affect the user’s behavior for the rest of the study, for example by revealing the study’s purpose. Such questions can lead users to focus on things they normally wouldn’t give a second thought.

Use pre-study questions to gather information about user demographics, experiences and impressions of your product or brand:

What is your current occupation?

How often do you shop online?

Are you familiar with this brand?

Example of pre-study questions in UXtweak Website Testing Demo Study:

Intra-study questions

Intra-study questions are used to get more details on the respondent’s behavior during the study, to find out why they did a certain thing and to motivate them to verbalize their actions.

It’s often hard to understand why the respondent clicked a certain item during the test or which part of the interface confused them. Intra-study questions help you get into the user’s mind, find the reasons for their actions, and pinpoint hidden problems. Save intra-study questions until the current task has been completed.

Asking respondents to verbalize their thoughts during a task makes them think about things more deeply, so they may notice things they wouldn’t during a normal interaction, which changes their behavior.

In moderated testing, the moderator will be able to come up with intra-study questions dynamically for each respondent. In unmoderated testing, it’s key that you craft after-task questions that extract as much information from the respondents as possible.

Ask them to share their current thoughts and opinions:

What did you expect to happen when clicking on this item?

What is your opinion on the product’s design?

Do you find this feature helpful or unhelpful?

What do you think of …

If you were looking for …, where would you expect to find it?

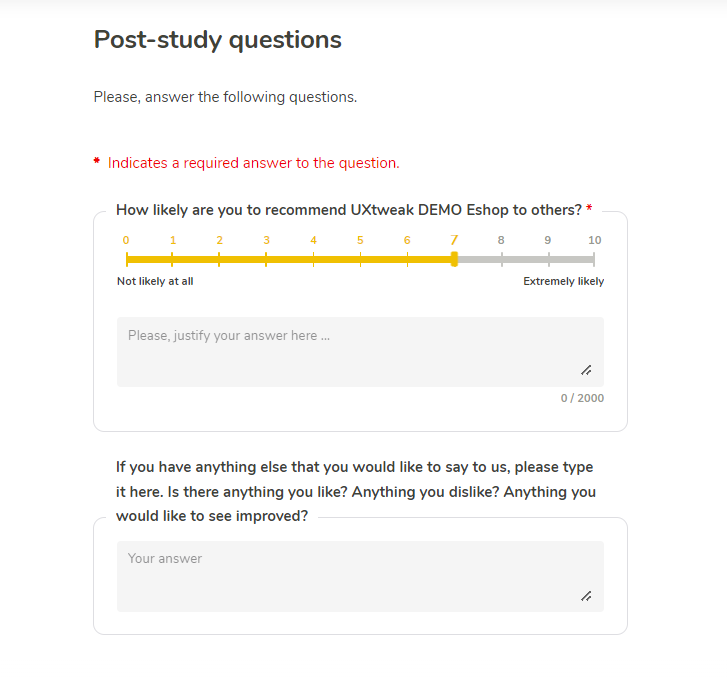

Post-study questions

Post-study questions give you a chance to ask testers about their overall experience with the product or service and with the usability test itself. You can also ask for additional details about the tasks, such as whether they were easy to understand. It’s good practice to give testers a short questionnaire right after the study ends, while everything is still fresh in their minds.

You can ask questions like:

What is your overall impression of the test?

On a scale from 1 to 5 (1 = very difficult, 5 = very easy), how would you rate the difficulty of using this app?

What did you like the most/the least about the app?

Did you feel confused at any point? If so, explain what happened.

On a scale from 1 to 5 (1 = very dissatisfied, 5 = very satisfied), how would you rate your experience with the product?

Example of post-study questions in UXtweak Website Testing Demo Study:

How to avoid bias in your usability testing questions

When preparing a questionnaire, bias is your worst enemy. Poorly formulated questions can mess with respondents’ minds and lead them to give answers that don’t reflect what they actually think, skewing your research and complicating the data analysis.

We’ve gathered some helpful tips to follow in order to avoid bias in your next study.